Advancing meaningful AI systems designed to work with and for their users.

Last week, I discussed my work on Human Scale AI with the team at IA Collaborative, a global design and innovation consultancy working with Fortune 100 leaders. True to its name—Insight to Action—IA Collaborative is widely recognized for translating deep human‑centered insight into impactful products, services, and experiences. As they put it:

“AI is reshaping how companies compete, serve, and grow. But the leap from experimentation to real competitive advantage demands more than technology. It demands intelligence — designed to be collaborative. IA Collaborative serves executives who want to make bold, high-stakes moves with AI — and need a partner that can think creatively, decide strategically, and build what others won’t.”

I’m grateful to everyone who participated and to Matt Alverson for hosting a thoughtful session.

WHY HUMAN SCALE AI

Our conversation focused on a central tension facing contemporary AI systems: while technical capability is advancing at unprecedented speed and scale, many implementations still fall short at the human level by undermining trust, sensemaking, and real-world effectiveness.

These are still early days for a technology that is already pervasive. Technical acceleration alone does not guarantee meaningful progress. Much of today’s emphasis still centers on a rapid succession of technical capabilities, such as model size and automation potential. However, implementations that are misaligned with human values, needs, and expectations can lead to frustrating or misleading outcomes that undermine client trust and jeopardize real business value.

Human Scale AI closes these gaps by deliberately accounting for human–machine systems that must continuously adapt across:

- Context and lifecycle: dynamic system modeling that reflects evolving user journeys rather than static tasks

- Agency and accountability: visibility, oversight, and meaningful human control at decision points

- Complementary capabilities: clear understanding of what humans do best, what machines do best, and how those roles shift over time

- Collective intelligence: federated systems that compound value across individuals, teams, and networks

- Emergent needs: the ability to surface unarticulated opportunities and future requirements as systems learn and coexist with users

It is also worth stating that a crucial success factor is knowing how to design sophistication that is proportionate and progressive, rather than complexity that is opaque, self-defeating, and liable to blindside users. Anchoring AI design at human scale is not a new idea; it continues principles that have guided the most enduring design successes for decades.

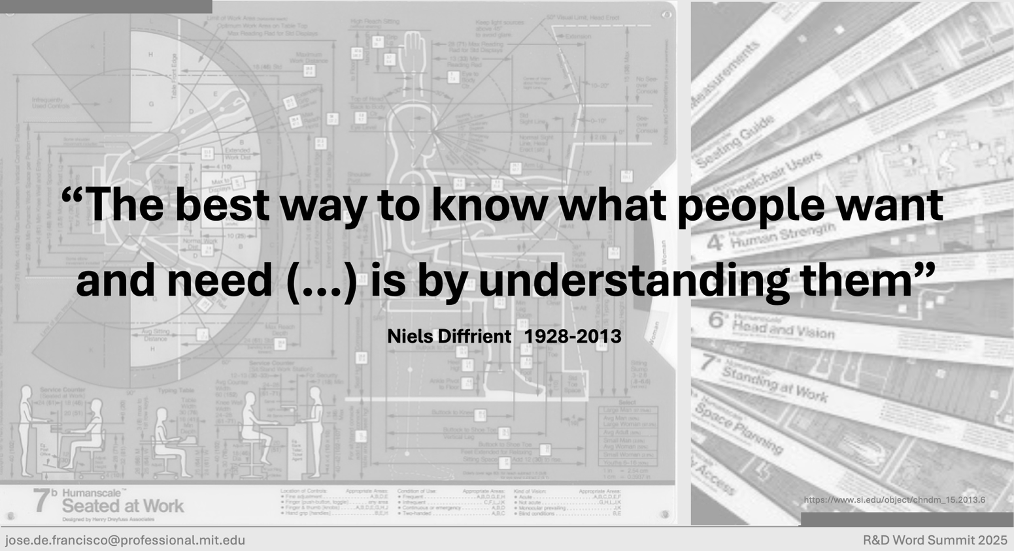

HUMANSCALE LINEAGE

This approach echoes sound human-centered design principles that have stood the test of time. Henry Dreyfuss Associates demonstrated in the mid-20th century that design quality is best attained by studying human data, culminating in the original Humanscale project, an iconic reference for those of us devoted to designing systems that work for people rather than, however unintentionally, against them.

IA Collaborative reissued Humanscale a decade ago, a decision that reflects the firm’s empirical grounding, pragmatic rigor, and respect for real users and clients. These qualities are essential as AI becomes embedded in everyday life and critical systems.

THE HYBRID AI IMPERATIVE

As AI systems move from tools into collaborators embedded in real work, human‑centered principles must now be expressed not only in interfaces, but in system architectures themselves.

From an experience blueprinting standpoint, front stage and back stage are becoming intertwined across omnichannel capabilities. Systems are also becoming more multimodal, integrating language, vision, audio, and other signals to better match human perception and natural interaction across a variety of device form factors and layered extended reality (XR) experiences.

At the infrastructure level, AI systems are moving toward hybrid deployments spanning federated architectures to better address immediacy, reliability, and sovereign, actionable governance. This shift reflects a series of pragmatic technological responses to the limitations of purely generative models as they encounter the complexities of real-life human work.

Early hybrid approaches emerged as in-context learning, where humans provide task framing and few-shot examples at runtime, followed by Retrieval-Augmented Generation (RAG), which grounded model behavior in external, authoritative knowledge. This evolution continued through the matching and orchestration of large and small models, context engineering that makes system intent explicit and operational, and agentic AI orchestration, where planning, tool use, and execution are coordinated across multiple steps rather than treated as a single model invocation.
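To make the first two steps concrete, here is a minimal sketch of how few-shot examples and retrieved knowledge might be combined into a single prompt. Everything in it is an illustrative assumption: the function names (`tokenize`, `retrieve`, `build_prompt`), the sample documents, and the word-overlap scoring, which stands in for the embedding-based search a production RAG system would more likely use. No model is called; the sketch only shows the assembly of context.

```python
import re

def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query: str, documents: list[str], k: int = 1) -> list[str]:
    """Toy retriever (assumption): rank documents by word overlap with the query."""
    query_words = tokenize(query)
    ranked = sorted(
        documents,
        key=lambda doc: len(query_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:k]

def build_prompt(query: str, documents: list[str],
                 examples: list[tuple[str, str]], k: int = 1) -> str:
    """Combine retrieved context (RAG) with few-shot examples (in-context learning)."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    shots = "\n".join(f"Q: {q}\nA: {a}" for q, a in examples)
    return (
        "Use only the context below.\n"
        f"Context:\n{context}\n\n"
        f"{shots}\n\n"
        f"Q: {query}\nA:"
    )

# Hypothetical knowledge base and a single few-shot example.
DOCS = [
    "Refunds are issued within 14 days of a return request.",
    "Support is available on weekdays from 9 to 5.",
]
EXAMPLES = [("When is support available?", "Weekdays from 9 to 5.")]

prompt = build_prompt("How long do refunds take?", DOCS, EXAMPLES)
print(prompt)
```

The point of the sketch is that the human contribution (task framing, examples) and the authoritative knowledge (retrieved documents) both remain visible and inspectable before any model sees them.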

Hybrid inference leverages both centralized cloud power and localized edge intelligence, while neurosymbolic AI combines generative capabilities with rule-based and expert reasoning systems to ensure logical consistency. More recently, protocol-level approaches such as the Model Context Protocol (MCP) have begun to formalize how models, agents, tools, and data sources interact. This enables more modular, interoperable, governable, and scalable hybrid AI systems as part of a broader convergence toward standardized, interoperable interfaces.
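As a rough illustration of hybrid inference, the sketch below routes a request to either an on-device model or a cloud model. The `Request` fields, the two thresholds, and the routing rules are all hypothetical; a real deployment would derive them from its own privacy policy, latency measurements, and model capabilities.

```python
from dataclasses import dataclass

@dataclass
class Request:
    text: str
    contains_personal_data: bool = False
    max_latency_ms: int = 2000  # how long the user can reasonably wait

# Assumed values, chosen only for illustration.
EDGE_WORD_LIMIT = 50        # what a small local model is assumed to handle well
CLOUD_ROUND_TRIP_MS = 300   # assumed network plus cloud inference time

def route(request: Request) -> str:
    """Return "edge" or "cloud" for a request under the assumed rules."""
    # Governance first: personal data stays on the device.
    if request.contains_personal_data:
        return "edge"
    # Immediacy: if the cloud cannot answer in time, stay local.
    if request.max_latency_ms < CLOUD_ROUND_TRIP_MS:
        return "edge"
    # Capability: long inputs go to the larger centralized model.
    if len(request.text.split()) > EDGE_WORD_LIMIT:
        return "cloud"
    return "edge"

print(route(Request("Summarize my medical notes", contains_personal_data=True)))
print(route(Request("word " * 200)))
```

Ordering the checks as governance, then immediacy, then capability is one possible design choice; it means a privacy constraint is never traded away for a better answer.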

The hybrid approaches outlined here are not exhaustive, and a forthcoming article will explore them in greater depth. In any case, AI creates enduring value not by dialing up technical prowess and maximizing automation alone, but by meeting human scale.

HUMAN SCALE AI

Last but not least, even in cases of advanced automation, these remain human–machine systems. Human‑in‑the‑loop principles echo Lean’s concept of Jidoka, automation with a human touch, reimagined for the AI era to ensure accountability, quality, and trust.
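One way to picture a Jidoka-style checkpoint in software is a gate that lets routine actions proceed but stops and hands control to a person when an action is irreversible or the system is unsure. The sketch below is an assumption-laden illustration: the `Proposal` fields, the 0.9 threshold, and the `ask_human` callback are hypothetical, and the confidence score is assumed to be supplied by the upstream system.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class Proposal:
    action: str
    confidence: float   # 0.0 to 1.0, assumed to come from the upstream system
    reversible: bool

CONFIDENCE_THRESHOLD = 0.9  # assumed cutoff for acting without review

def needs_human(proposal: Proposal) -> bool:
    """Stop the line when the action is irreversible or confidence is low."""
    return (not proposal.reversible) or proposal.confidence < CONFIDENCE_THRESHOLD

def decide(proposal: Proposal,
           ask_human: Callable[[Proposal], bool],
           audit_log: list[str]) -> bool:
    """Return True if the action may proceed, recording who made the call."""
    if needs_human(proposal):
        approved = ask_human(proposal)
        verdict = "approved" if approved else "rejected"
        audit_log.append(f"human {verdict}: {proposal.action}")
        return approved
    audit_log.append(f"auto-approved: {proposal.action}")
    return True

# Usage with a stand-in reviewer who rejects everything escalated to them.
log: list[str] = []
decide(Proposal("draft reply", 0.97, reversible=True), lambda p: False, log)
decide(Proposal("delete account", 0.99, reversible=False), lambda p: False, log)
print(log)
```

The audit log is what ties the gate back to accountability: every outcome records whether a person or the system made the decision.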

Human Scale AI is ultimately a call to anchor innovation in first principles by designing world-class systems that are advanced enough to unlock new possibilities, yet grounded enough to be trusted, understood, and adopted. Raymond Loewy’s MAYA principle, “Most Advanced Yet Acceptable,” captures this balance with remarkable precision.

From Henry Dreyfuss’s Humanscale, through Lean’s principle of Jidoka, to Raymond Loewy’s MAYA, these mid‑20th‑century design foundations continue to resonate because they express something timeless: progress succeeds when intelligence advances in proportion to human values, judgment, and acceptance.

As AI becomes embedded in everyday work and critical systems, progress will belong not to those who push complexity the farthest, but to those who scale intelligence in ways humans can meaningfully engage, govern, and evolve alongside. Organizations that get this right are not only bound to outperform and outcompete others; they will define what responsible progress means in the AI era.

ACKNOWLEDGEMENTS

Thanks to IA Collaborative’s Matt Alverson, Dan Kraemer, Kathleen Brandenburg, Jeff Gershune, and Anthony Morello for their insights and hospitality.
